Companies using Google BERT

We have data on 391 companies that use Google BERT. The companies using Google BERT are most often found in the United States and in the Information Technology and Services industry. Google BERT is most often used by companies with more than 10,000 employees and $200M-$1000M in revenue. Our data for Google BERT usage goes back 1 year and 10 months.

Who uses Google BERT?

| Company | Website | Country | Revenue (USD) | Company Size (employees) |
|---|---|---|---|---|
| Pure Storage | purestorage.com | United States | 1B-5B | 5000-10000 |
| SAP SE | sap.com | Germany | >10B | >10000 |
| Accenture PLC | accenture.com | Ireland | >10B | >10000 |
| Infosys Ltd | infosys.com | India | >10B | >10000 |
| Hitachi Vantara | hitachivantara.com | United States | 5B-10B | 5000-10000 |

Target Google BERT customers to accomplish your sales and marketing goals.

Google BERT Market Share and Competitors in Language Models

We combine large-scale indexing with data science to monitor the market share of over 15,000 technology products, including Language Models. By scanning billions of public documents, we collect an average of over 100 data fields per company. In the Language Models category, Google BERT has a market share of about 0.6%. Other major competing products in this category include:

Language Models

What is Google BERT?

Google BERT (Bidirectional Encoder Representations from Transformers) is a deep learning language model based on the transformer architecture. It is pre-trained for NLP (Natural Language Processing) and then fine-tuned for specific tasks, and it improves search engines' understanding of human language. BERT is an "encoder-only" transformer that consists of three modules: embedding, a stack of encoders, and un-embedding. It is pre-trained on unlabelled text using language-modelling tasks. Because its self-attention is bidirectional, a BERT model can consider the full context of a word by looking at the words that come both before and after it, which is particularly useful for understanding the intent behind search queries.
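The "full context" point can be made concrete with a toy calculation. The sketch below computes single-head scaled dot-product attention weights twice: once with no mask, as in an encoder-only model like BERT, and once with a causal (left-to-right) mask for contrast. It is a simplification under stated assumptions: the 4-dimensional token vectors are random placeholders rather than learned embeddings, queries and keys are the raw vectors (no learned projection matrices), and the function names are hypothetical, not part of any BERT library.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax; entries of -inf receive weight 0."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(queries, keys, causal=False):
    """Scaled dot-product attention weights for a single head (hypothetical helper).

    causal=False mimics an encoder-only model such as BERT: every position
    may attend to every position, both to its left and to its right.
    causal=True mimics a left-to-right decoder, shown only for contrast.
    """
    d = len(queries[0])
    weights = []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if causal and j > i:
                scores.append(float("-inf"))  # hide tokens after position i
            else:
                scores.append(sum(a * b for a, b in zip(q, k)) / math.sqrt(d))
        weights.append(softmax(scores))
    return weights


random.seed(0)
tokens = ["the", "bank", "of", "the", "river"]
# Toy placeholder vectors; a real model learns its embeddings (assumption).
vectors = [[random.uniform(-1, 1) for _ in range(4)] for _ in tokens]

bi = attention_weights(vectors, vectors, causal=False)
uni = attention_weights(vectors, vectors, causal=True)

pos = tokens.index("bank")
print("bidirectional weight bank->river:", round(bi[pos][4], 3))
print("causal weight bank->river:", uni[pos][4])  # always 0.0 under the causal mask
```

With the unmasked weights, "bank" assigns a non-zero weight to "river", which comes after it; with the causal mask that weight is exactly zero. In a trained model, this bidirectional access is the mechanism that lets right-hand context help disambiguate a word.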

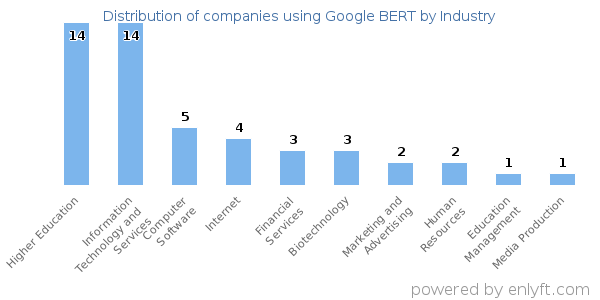

Top Industries that use Google BERT

Looking at Google BERT customers by industry, we find that Information Technology and Services (19%), Higher Education (17%), Computer Software (13%) and Internet (5%) are the largest segments.

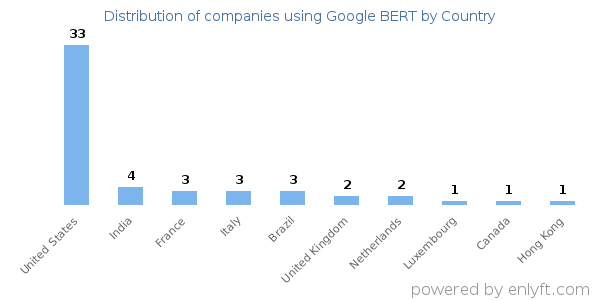

Top Countries that use Google BERT

48% of Google BERT customers are in the United States and 12% are in India.

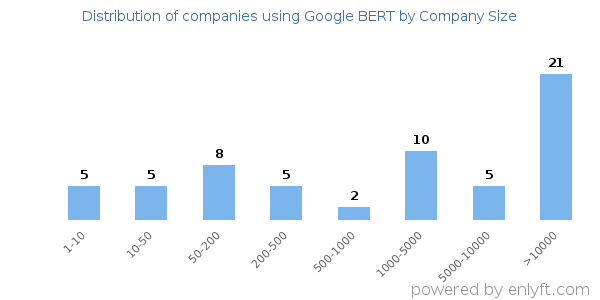

Distribution of companies that use Google BERT based on company size (Employees)

Of all the customers that are using Google BERT, half (50%) are large (>1000 employees), 23% are small (<50 employees) and 25% are medium-sized (50-1000 employees).

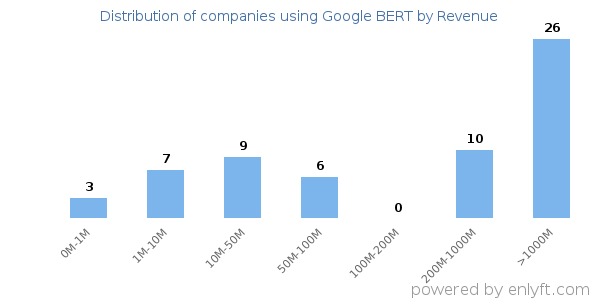

Distribution of companies that use Google BERT based on company size (Revenue)

Of all the customers that are using Google BERT, 30% are small (<$50M), 8% are medium-sized ($50M-$1000M) and 23% are large (>$1000M).