Companies using Hugging Face Transformers

We have data on 5,312 companies that use Hugging Face Transformers. These companies are most often found in the United States and in the Information Technology and Services industry. Hugging Face Transformers is most often used by companies with 50-200 employees and $1M-10M in revenue. Our usage data for Hugging Face Transformers goes back 1 year and 7 months.

Who uses Hugging Face Transformers?

| Company | Website | Country | Revenue (USD) | Company Size (employees) |
|---|---|---|---|---|
| Waracle | waracle.com | United Kingdom | 50M-100M | 200-500 |
| EPAM Systems Inc | epam.com | United States | 1B-5B | >10000 |
| Globant | globant.com | Luxembourg | 1B-5B | >10000 |
| Master Electronics | masterelectronics.com | United States | 200M-1000M | 500-1000 |
| Coursera | coursera.org | United States | 200M-1000M | 1000-5000 |


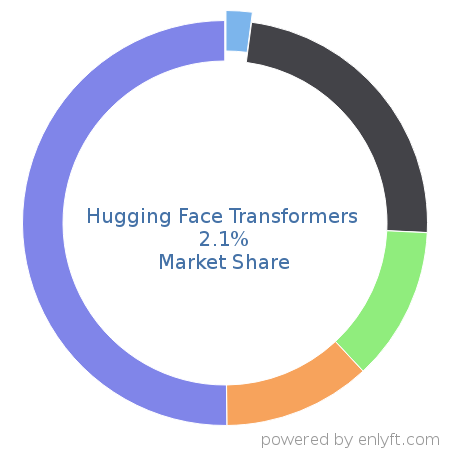

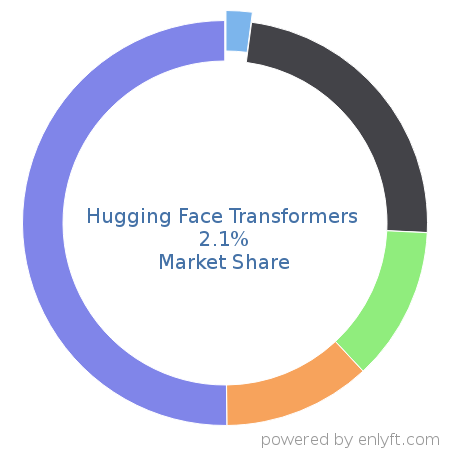

Hugging Face Transformers Market Share and Competitors in Machine Learning

We combine indexing techniques with data science to monitor the market share of over 15,000 technology products, including those in the Machine Learning category. By scanning billions of public documents, we collect insights on every company, with over 100 data fields per company on average. In the Machine Learning category, Hugging Face Transformers has a market share of about 2.1%.

What is Hugging Face Transformers?

Hugging Face Transformers is an open-source deep learning framework that provides APIs and tools for downloading state-of-the-art pretrained models and fine-tuning them to maximize performance. Using pretrained models can reduce compute costs and carbon footprint, and save the time and resources required to train a model from scratch. These models support common tasks across different modalities, such as text classification, named entity recognition, question answering, language modeling, summarization, translation, multiple choice, table question answering, optical character recognition, information extraction from scanned documents, video classification, automatic speech recognition, audio classification, image classification, object detection and segmentation. Transformers supports framework interoperability between PyTorch, TensorFlow and JAX.
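As a rough illustration of what downloading and using a pretrained model looks like in practice, the sketch below wraps the library's `pipeline` helper for text classification. The function name `classify_sentiment` and the choice of checkpoint are assumptions made for this example, not something this page prescribes; the first call needs the `transformers` package, a backend such as PyTorch, and network access to fetch the model.

```python
def classify_sentiment(texts, model_name="distilbert-base-uncased-finetuned-sst-2-english"):
    """Classify the sentiment of each text with a pretrained model.

    `classify_sentiment` and the default checkpoint are illustrative
    choices for this sketch (assumptions, not part of the page above).
    """
    # Imported lazily so that defining the function does not require
    # the library to be installed.
    from transformers import pipeline

    # pipeline() downloads the pretrained weights on first use and caches them.
    classifier = pipeline("sentiment-analysis", model=model_name)

    # Returns one dict per input text, each with "label" and "score" keys.
    return classifier(list(texts))
```

Calling `classify_sentiment(["Pretrained models saved us weeks of training."])` would return a list with one dictionary holding a predicted label and a confidence score. No training from scratch is involved, which is the saving the paragraph above describes.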

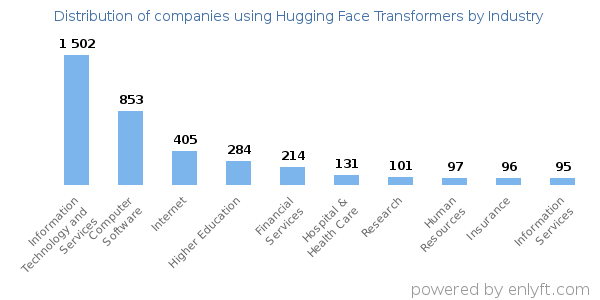

Top Industries that use Hugging Face Transformers

Looking at Hugging Face Transformers customers by industry, we find that Information Technology and Services (28%), Computer Software (16%), Internet (8%) and Higher Education (5%) are the largest segments.

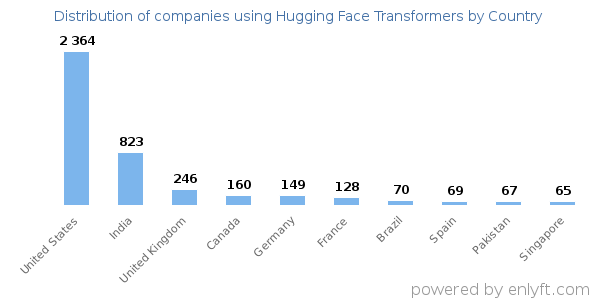

Top Countries that use Hugging Face Transformers

44% of Hugging Face Transformers customers are in the United States and 15% are in India.

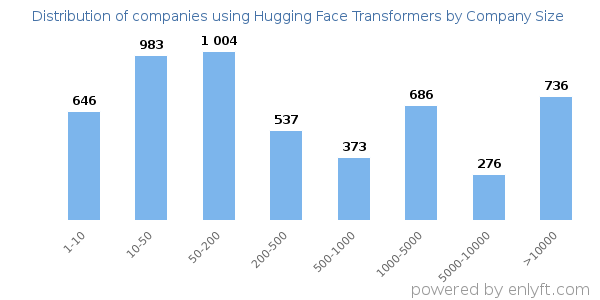

Distribution of companies that use Hugging Face Transformers based on company size (Employees)

Of all the customers that are using Hugging Face Transformers, 31% are small (<50 employees), 36% are medium-sized and 32% are large (>1000 employees).
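To put these shares in absolute terms, the sketch below converts them into approximate company counts. It assumes the percentages apply to the full set of 5,312 companies mentioned at the top of the page, which the page does not state explicitly; the helper name `approximate_counts` is hypothetical. The three shares add up to 99%, so about 1% is unaccounted for, possibly because of rounding.

```python
# Total from the page summary; applying the shares to this total is an assumption.
TOTAL_COMPANIES = 5312

# Employee-size shares (in percent) as reported above.
employee_size_shares = {
    "small (<50 employees)": 31,
    "medium-sized": 36,
    "large (>1000 employees)": 32,
}


def approximate_counts(total, shares_percent):
    """Convert percentage shares into rounded company counts (hypothetical helper)."""
    return {segment: round(total * pct / 100) for segment, pct in shares_percent.items()}


counts = approximate_counts(TOTAL_COMPANIES, employee_size_shares)

# Share not covered by the three reported segments.
unreported_percent = 100 - sum(employee_size_shares.values())

for segment, count in counts.items():
    print(f"{segment}: ~{count} companies")
print(f"unaccounted share: {unreported_percent}%")
```

Under that assumption, the segments come to roughly 1,647 small, 1,912 medium-sized and 1,700 large companies.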

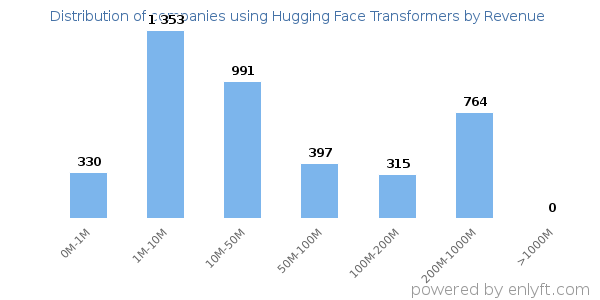

Distribution of companies that use Hugging Face Transformers based on company size (Revenue)

Of all the customers that are using Hugging Face Transformers, a majority (52%) are small (<$50M), 8% are medium-sized and 15% are large (>$1000M).